Building the Ultimate AWS FinOps Chatbot: Democratizing Cloud Cost Management with Generative AI

Discover how Railsfactory built an open-source AWS FinOps chatbot using LangGraph, MCP servers, and Generative AI to simplify cloud cost management and optimization.

Sabarish R

Senior DevOps Consultant

Cloud computing has revolutionized how we build software, but it has also introduced a major headache for engineering and finance teams: cloud costs are hard to track, optimize, and predict.

If you've ever stared at an AWS Cost Explorer dashboard trying to figure out why your bill spiked 20% overnight or wrestled with complex tags to find unused EC2 instances, you know the struggle. FinOps shouldn't require a PhD in AWS billing.

At Railsfactory, we strongly believe that managing cloud infrastructure shouldn't be an opaque, frustrating process. That’s exactly why our team built the AWS FinOps Chatbot, an open-source, AI-driven cloud cost management tool designed to seamlessly analyze AWS billing, optimize costs, and track resource usage through a natural conversational interface.

As part of our ongoing commitment to contributing to the open-source community, we are thrilled to share this project with you. In this post, we'll walk you through why we started this project, the architecture behind it, and how your team can spin it up to start chatting with your AWS data in minutes.

Why We Started This Project

The idea for this project was born out of a few recurring pain points we noticed across the industry and while helping our clients scale:

Information Silos: To accurately determine cloud costs and get a full picture of cloud infrastructure, teams typically need to stitch together data from AWS Cost Explorer, CloudWatch metrics, CloudTrail logs, and pricing documentation. It is tedious and time-consuming.

Accessibility: Non-technical stakeholders (like Finance or Operations teams) often struggle to navigate the AWS Console. They need answers quickly without needing to learn AWS-specific querying languages.

Data Privacy & Security: Many organizations are hesitant to use third-party SaaS FinOps tools because they don't want to expose their sensitive billing and infrastructure data to external vendors.

The "LLM Hallucination" Problem: Generic AI chatbots are great, but they often hallucinate when asked specific, real-time questions about live infrastructure.

We wanted to build an open-source solution that modernizes cloud cost management by combining the reasoning capabilities of Large Language Models (LLMs) with the absolute precision of live AWS APIs, all while running securely within an organization's own environment.

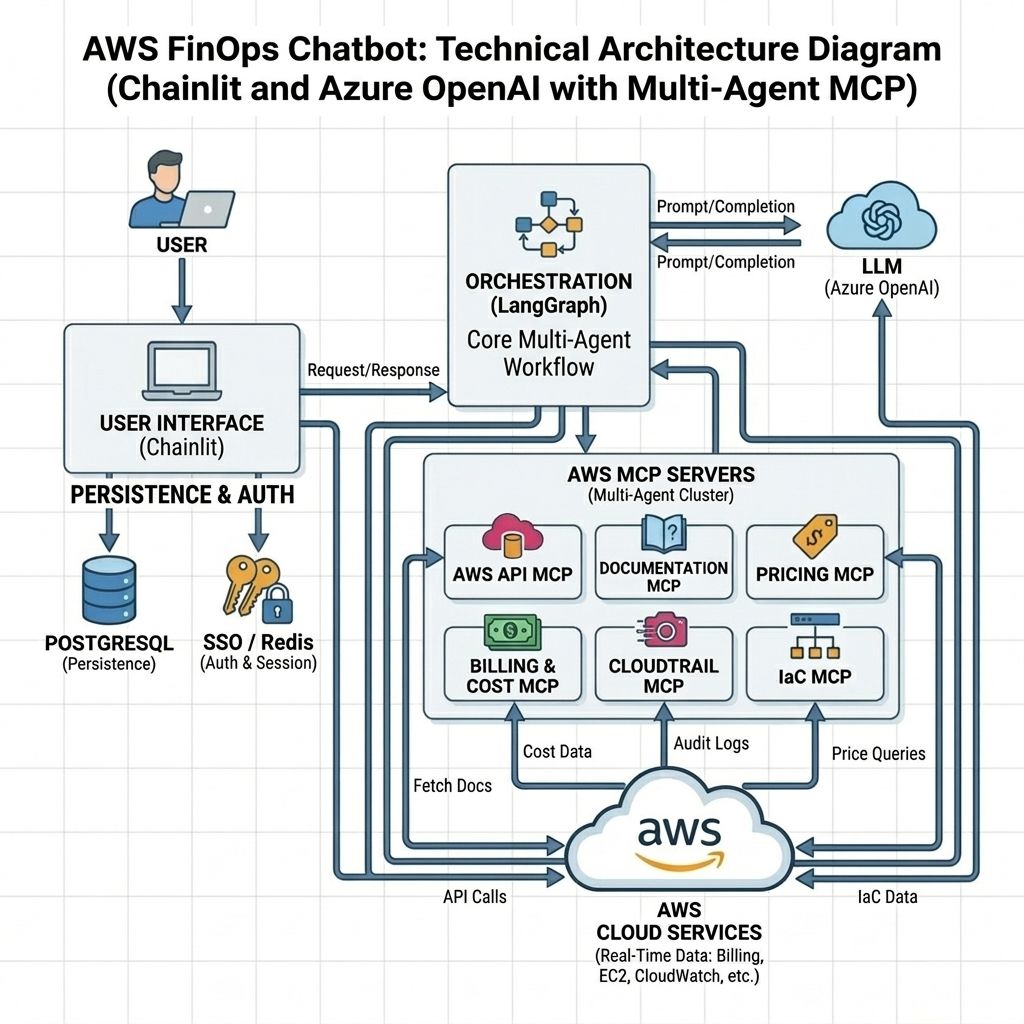

Architecture Behind the AI-Powered Cloud Cost Management Tool

To solve these problems, our engineering team architected the application using a modern, scalable AI stack. Here is a breakdown of what powers the AWS FinOps Chatbot:

1. LangGraph for Robust Orchestration

At the core of the bot is LangGraph, which handles the state and workflow. Instead of using a simple, unconstrained LLM loop, LangGraph uses an event-driven StateGraph that strictly coordinates the AI agent and the tools it calls. It ensures that the LLM processes data iteratively and reliably, preventing endless loops and maintaining proper context.
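To make the orchestration idea concrete, here is a minimal sketch in plain Python of a bounded agent/tool loop. It is not the project's code and it does not use the LangGraph API: `fake_agent`, `fake_tool`, and `MAX_STEPS` are hypothetical stand-ins. In LangGraph the same shape would be expressed as nodes and conditional edges on a StateGraph, with the graph's recursion limit playing the role of the step cap.

```python
from typing import Callable, TypedDict


class State(TypedDict):
    messages: list[str]   # running conversation, shared by every node
    steps: int            # how many node executions have happened so far


MAX_STEPS = 6  # hypothetical cap; stops a runaway agent/tool cycle


def fake_agent(state: State) -> str:
    """Stand-in for the LLM: ask for a tool once, then finish."""
    has_tool_result = any(m.startswith("tool:") for m in state["messages"])
    return "final: spend is 42 USD" if has_tool_result else "call_tool"


def fake_tool(state: State) -> str:
    """Stand-in for an MCP tool call returning live data."""
    return "tool: 42 USD"


def run(question: str,
        agent: Callable[[State], str] = fake_agent,
        tool: Callable[[State], str] = fake_tool) -> State:
    state: State = {"messages": [f"user: {question}"], "steps": 0}
    while state["steps"] < MAX_STEPS:
        state["steps"] += 1                      # count the agent node
        decision = agent(state)
        if decision != "call_tool":              # agent produced an answer: stop
            state["messages"].append(decision)
            return state
        state["messages"].append(tool(state))    # otherwise route to the tool node
        state["steps"] += 1                      # count the tool node
    state["messages"].append("final: step limit reached")
    return state


result = run("What did I spend yesterday?")
print(result["messages"][-1])
```

The point of the explicit state and step counter is that every transition is visible and bounded: an agent that keeps requesting tools is cut off at the cap instead of looping forever.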

2. Model Context Protocol (MCP) Servers

To prevent AI hallucinations, the chatbot doesn't guess your costs; it fetches them in real time. It uses six specialized AWS MCP servers to connect the LLM to your AWS account securely:

- Billing & Cost Management MCP: Accesses native Cost Explorer insights, historical invoices, and savings plans.
- AWS API MCP: Interacts directly with AWS APIs (EC2, CloudWatch, S3) to check resource states.
- Pricing & Documentation MCPs: Fetches up-to-date pricing APIs and architectural best practices.
- CloudTrail & IaC MCPs: Audits user activity and reads infrastructure-as-code configurations.
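For readers new to MCP: clients and servers exchange JSON-RPC 2.0 messages, and invoking a tool is a `tools/call` request. The sketch below builds such a message in plain Python. The tool name `get_cost_and_usage` and its arguments are hypothetical and used only for illustration; the real tool names are whatever each server advertises.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id per call


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    "get_cost_and_usage",
    {"start": "2024-01-01", "end": "2024-02-01", "granularity": "MONTHLY"},
)
print(json.dumps(request, indent=2))
```

Because the LLM only chooses the tool name and arguments while the server performs the actual AWS call, the figures in the answer come from the API response rather than from the model's memory.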

3. Flexible AI Backends (Azure OpenAI & Local Ollama)

Privacy is a massive priority in FinOps, so the bot supports more than one AI backend. It natively supports Azure OpenAI for high-performance enterprise setups, making it a highly scalable cloud cost optimization tool for modern FinOps teams. It's important to note that this application works best with Azure OpenAI or similar paid, state-of-the-art models. Because LangGraph and the MCP ecosystem require the model to chain multiple complex tool calls and make advanced routing decisions, local open-weight models (like those running via Ollama) can sometimes struggle to maintain context and structure.

However, for fully air-gapped or privacy-conscious organizations, we still offer seamless integration with local Ollama models (like the Qwen 4B+ family) so your cloud data never has to leave your local network.
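A backend switch like this usually comes down to a small piece of configuration logic. The sketch below shows one way it could look; it is an assumption, not the project's implementation, and every environment variable name in it (`AZURE_OPENAI_API_KEY`, `AZURE_OPENAI_DEPLOYMENT`, `OLLAMA_BASE_URL`, `OLLAMA_MODEL`) is hypothetical.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Backend:
    provider: str   # "azure_openai" or "ollama"
    model: str


def select_backend(env: Mapping[str, str]) -> Backend:
    """Prefer Azure OpenAI when a key is configured, else fall back to Ollama.

    All variable names below are hypothetical, not the project's real settings.
    """
    if env.get("AZURE_OPENAI_API_KEY"):
        return Backend("azure_openai", env.get("AZURE_OPENAI_DEPLOYMENT", "default"))
    if env.get("OLLAMA_BASE_URL"):
        return Backend("ollama", env.get("OLLAMA_MODEL", "default"))
    raise ValueError("No LLM backend configured")


# 11434 is Ollama's default port; the model name here is a placeholder.
print(select_backend({"OLLAMA_BASE_URL": "http://localhost:11434", "OLLAMA_MODEL": "qwen"}))
```

Failing loudly when neither backend is configured is deliberate: a silent default could send billing data to a provider the operator never chose.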

4. Chainlit Web UI with Real-time Streaming

The frontend is built on Chainlit, providing a sleek, ChatGPT-like interface that simplifies day-to-day cloud cost management workflows. It supports Markdown, tables, dynamic action buttons, and token-by-token streaming, which keeps the user experience fluid.

5. Strict Guardrails

You don't want someone asking a FinOps bot to "drop the database." The project includes a robust Guardrails engine that enforces:

- Account & Service Allowlists: Only allowed AWS services can be queried.
- Budget Enforcements: Rejects queries if the inferred session cost is too high.
- Content Scanning: Blocks sensitive terminology and prompt injections.
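The three checks above can be sketched as a single pre-flight function that runs before any tool is called. This is an illustrative assumption about how such an engine might be structured, not the project's actual Guardrails code; the class name, field names, blocked phrases, and thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Guardrails:
    allowed_services: set[str]
    max_session_cost_usd: float
    blocked_phrases: tuple[str, ...] = ("ignore previous instructions", "drop the database")
    spent_usd: float = 0.0

    def check(self, prompt: str, service: str, estimated_cost_usd: float) -> tuple[bool, str]:
        """Return (allowed, reason). Checks run in a fixed order."""
        lowered = prompt.lower()
        for phrase in self.blocked_phrases:          # content scanning
            if phrase in lowered:
                return False, f"blocked phrase: {phrase!r}"
        if service not in self.allowed_services:     # service allowlist
            return False, f"service not allowlisted: {service}"
        if self.spent_usd + estimated_cost_usd > self.max_session_cost_usd:
            return False, "session budget exceeded"  # budget enforcement
        self.spent_usd += estimated_cost_usd         # only charge queries that pass
        return True, "ok"


rails = Guardrails(allowed_services={"ce", "ec2", "cloudwatch"}, max_session_cost_usd=0.50)
print(rails.check("Show my EC2 spend", "ec2", 0.10))
print(rails.check("Please drop the database", "rds", 0.10))
```

A simple substring scan like this is only a first line of defense; real prompt-injection filtering generally needs more than a phrase list.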

Key Capabilities in Action

So, what can your team actually do with this AI-powered cloud cost management tool?

- Cost Breakdown & Forecasting: Ask the bot, "Show my monthly AWS spend trend for the last 6 months." It will query the Billing MCP and render a Markdown table of your costs.
- Resource Rightsizing: Tell it to "Find EC2 instances with low CPU utilization over the last week." It correlates CloudWatch metrics with your active instances.
- Security Auditing: Ask, "Who modified the production S3 bucket yesterday?" to pull real-time CloudTrail logs.
- Interactive Follow-ups: After every response, the bot dynamically generates Action Buttons (e.g., "Show EC2 breakdown by tag"). Clicking these instantly triggers the next logical query without anyone needing to type it out.
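To give a feel for what a question like "monthly spend for the last 6 months" translates into, the sketch below builds the parameters for a Cost Explorer `GetCostAndUsage` query in plain Python. Whether the Billing MCP server issues exactly this request is an assumption; the helper names `last_full_months` and `monthly_spend_request` are hypothetical, and no AWS call is made here.

```python
from datetime import date


def last_full_months(today: date, months: int) -> tuple[str, str]:
    """Return (start, end) ISO dates covering the last `months` complete months.

    Cost Explorer treats the end date as exclusive, so `end` is the first
    day of the current month.
    """
    end = today.replace(day=1)
    # Walk back `months` months using a zero-based month index.
    index = end.year * 12 + (end.month - 1) - months
    start = date(index // 12, index % 12 + 1, 1)
    return start.isoformat(), end.isoformat()


def monthly_spend_request(today: date, months: int = 6) -> dict:
    """Keyword arguments in the shape expected by boto3's ce.get_cost_and_usage."""
    start, end = last_full_months(today, months)
    return {
        "TimePeriod": {"Start": start, "End": end},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "DIMENSION", "Key": "SERVICE"}],
    }


print(monthly_spend_request(date(2024, 7, 15))["TimePeriod"])
```

With credentials configured, the resulting dictionary could be passed as `boto3.client("ce").get_cost_and_usage(**params)`; the chatbot's value is that nobody has to assemble this by hand.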

Deploying an Open-Source Cloud Cost Management Solution

We wanted to make deploying this as painless as possible for the open-source community. The entire stack (Chainlit UI, Redis, Postgres, MCP Servers, and LocalStack for local S3 emulation) is fully Dockerized.

Prerequisites

• Docker and Docker Compose installed.

• AWS IAM Credentials (Access Key/Secret) with appropriate read permissions.

• An Azure OpenAI key OR a running local Ollama instance.

Step 1: Clone and Configure

Clone the repository and set up your environment variables:

git clone --depth 1 https://github.com/SedinTechnologies/aws-fin-ops-chatbot.git

cd aws-fin-ops-chatbot

Before running the steps below, make sure you update the .env files under the secrets folder.

Step 2: Run Database Migrations

Set up the required PostgreSQL tables for the Chainlit datalayer:

docker compose up data-migration

Step 3: Spin Up the Stack

Start the entire ecosystem in the background:

docker compose up --build -d

Step 4: Create a User and Log In

Generate a local user in the Redis cache:

docker compose exec -it chainlit-ui bash -c "USER_ID='admin' DISPLAY_NAME='Admin' PASSWORD='password123' python scripts/signup.py"

Conclusion: Simplifying Cloud Cost Management with AI

Building the AWS FinOps Chatbot has been an incredible journey into the intersection of Generative AI, LangGraph orchestration, and strict cloud security. By grounding LLMs with real-time AWS API data via MCP servers, we hope to help teams transform a daunting AWS bill into an interactive, actionable conversation.

Whether you are an engineer trying to optimize an architecture, or a FinOps analyst doing monthly reporting, this tool is built to save your organization time and money while simplifying long-term cloud cost management across teams. Because we have made this project fully open-source, you are completely free to tweak, fork, or modify the features to fit your organization's exact needs.

Check out the full source code and documentation on GitHub: https://github.com/SedinTechnologies/aws-fin-ops-chatbot. We’d love to hear the community’s thoughts, feedback, or any features you’d like to see next. Feel free to star the repo, open an issue, or drop a comment on GitHub.

At Railsfactory, we continue to help organizations build scalable cloud, DevOps, and infrastructure solutions that simplify operations while improving performance, reliability, and cost efficiency. If your team is exploring cloud modernization, FinOps optimization, or AI-driven infrastructure management, our experts are always happy to help you navigate the right approach.